More people will create music than ever before.

More new music will be created than in all of history.

The music market will continue to shift from head-focused to a long tail of creators, powered by AI music-making tools.

One of the top ten genres or styles of this decade will come from AI-enabled sounds.

More unique audio will be made this decade than in all of previous human history combined.

At least 8 of the top 10 most popular musicians of the decade will be real people.

“AI will demolish the music industry.” If you attend a Silicon Valley machine learning meetup (virtually, these days), don’t be surprised to hear this proclamation or one of its siblings: “AI-generated music is the future,” or “AI will kill musicians.” Venture capitalist Vinod Khosla has said, “I actually think 10 years from now, you won’t be listening to music.” Rather, he predicts you’ll only listen to personalized, AI-generated music.

There’s a kernel of truth in these grand statements. Generative deep learning technology is upending traditional media. Generated image and video are increasingly high quality, making it hard to distinguish AI-generated imagery from real imagery. And audio isn’t far behind.

But instead of killing musicians, I see a more nuanced future for artificial intelligence in music. Over the next decade, I believe: 1) everyone can be a great DJ with AI, 2) AI will unlock novel listening experiences, and 3) human musicians are here to stay.

Prediction #1: everyone can be a great DJ with AI

Corollaries:

For professional musicians, expert tools like Ableton Live and Reason already make it possible (with a lot of skill and effort) to get a broad and precise range of output. The opportunity for deep learning in music is much like it is for AI everywhere: to make it cheaper and easier to do more powerful and complicated things. In particular, AI can reduce the costs of making compelling music so dramatically that an entirely new group of casual creators can make music for the first time. DJs already blur the line between curation and creation. Many famous DJs have never “written” anything, yet have a distinctive style and fan-base. AI offers the opportunity to do this even more profoundly and accessibly.

Collectively, AI tools will empower everyone to change the sound, feeling, and cadence of music as quickly as swiping. Endless variations and remixes are coming for every song, old and new.

AI technology will impact every stage of music creation, from revealing trends in today’s musical zeitgeist to helping generate lyrics, melodies, and compositions. I believe the tools around composition and melody generation have the most potential, as these areas require the most expertise and production cost today.

Composition

Add, remove, or interpolate between instruments, genres, moods, and tempos.

High-quality audio style transfer is coming, and it’s going to be awesome.

Just like style transfer ushered in a new wave of creativity for image creation, similar techniques will be groundbreaking for audio. Take an existing song, and change it to be EDM, country, or opera. Add or remove instruments, change the mood of your whole song, or make just your chorus “sadder” or faster.

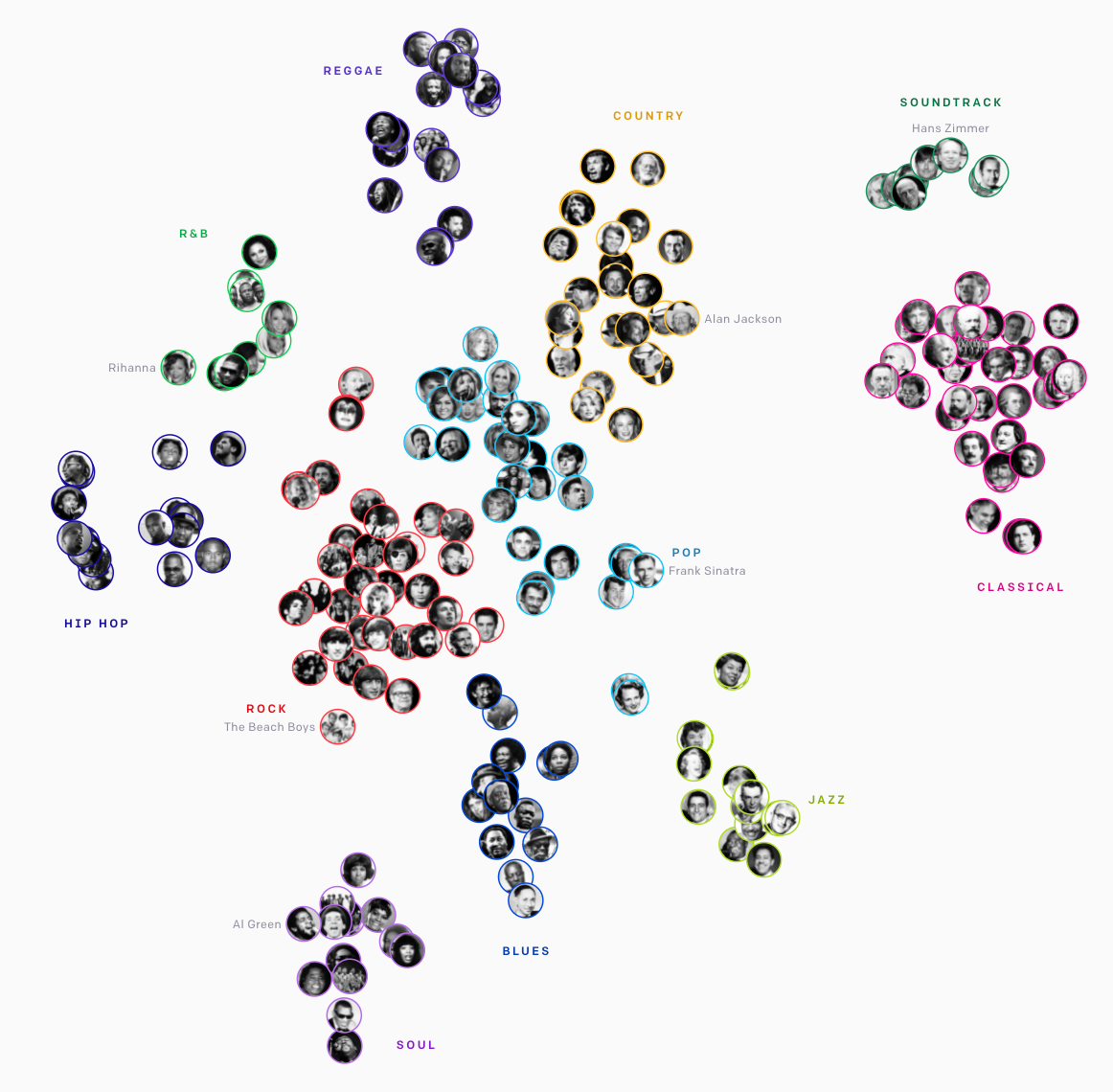

OpenAI’s Jukebox model learned to cluster music in an unsupervised way into styles based on their underlying content. Imagine dragging a song around this space to change its style.

AI will also change the most personal form of expression, the voice. Given written lyrics, AI can generate the vocals. Or picture the next generation of voice tools, changing your voice to be 30% more like Kanye, Taylor, or your favorite singer. Some tools are already able to do an impressive voice swap with a well-trained celebrity voice.

Even further down the musical layers of abstraction, AI will invent new primitives. You can already blend existing sounds into new hybrids, like making a half-harp, half-dog. In the same way that the synthesizer or the wah-wah pedal created new genres, I believe one of the top ten genres or sounds of the next decade will come from these kinds of AI-enabled primitives.

Melodies

Generate, interpolate, or autocomplete melodies.

What makes AI tools so powerful for generating melodies isn’t just that they can create fresh tunes from scratch. It’s that they make it so much easier to go from an idea to a compelling result. They enable you to 1) take germs of ideas and flesh them out, and 2) take existing music and morph it into something new.

As someone who often comes up with snippets of melody in the shower, I’m particularly excited about tools that will take a short input tune as a jumping-off point and generate longer continuations. One initial approach comes from a Google AI research project, one of whose models can continue unfinished tracks, a sort of “autocomplete” for music.

Interpolating between multiple existing songs or melodies is another hugely powerful tool, one that turns creation into a browsing experience.

One such tool lets you input up to four “seed loop” melodies, one in each corner, and explore a grid of interpolations between them.

(Note that this particular model was trained on MIDI files, so it ignores instrumentation.)
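The four-corner grid idea can be sketched as bilinear interpolation. This is a minimal sketch, not a description of how any particular tool works: it assumes each seed melody has already been encoded as a fixed-length vector by some model, and that a matching decoder (not shown) would turn each grid cell back into notes. The names `lerp` and `interpolation_grid` and the toy 2-D vectors are hypothetical, chosen only for illustration.

```python
from typing import List

Vector = List[float]


def lerp(a: Vector, b: Vector, t: float) -> Vector:
    """Blend two equal-length vectors: t=0 returns a, t=1 returns b."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def interpolation_grid(
    top_left: Vector,
    top_right: Vector,
    bottom_left: Vector,
    bottom_right: Vector,
    size: int,
) -> List[List[Vector]]:
    """Build a size x size grid whose corners are the four seed vectors."""
    if size < 2:
        raise ValueError("size must be at least 2")
    grid = []
    for row in range(size):
        v = row / (size - 1)  # vertical position, 0 at the top, 1 at the bottom
        left = lerp(top_left, bottom_left, v)
        right = lerp(top_right, bottom_right, v)
        # Walk horizontally between the left and right edge vectors.
        grid.append([lerp(left, right, col / (size - 1)) for col in range(size)])
    return grid


# Toy stand-ins for encoded melodies (a real encoding would have many dimensions).
seeds = ([0.0, 0.0], [4.0, 0.0], [0.0, 4.0], [4.0, 4.0])
grid = interpolation_grid(*seeds, size=3)
center = grid[1][1]  # an equal blend of all four seeds
```

Each cell is a weighted mix of the four corners, so moving across the grid shifts the result gradually from one seed toward another, which is what makes the "browse" framing work.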

Prediction #2: AI will unlock novel listening experiences

Corollaries:

AI will enable endless streams of audio deeply personalized based on taste and on spatial, temporal, or emotional context. This is one area where I partially agree with Vinod Khosla. While I don’t think AI-generated audio will replace human-made music, I do believe it will open up novel listening use cases. Especially for background music, today’s models can already make endless plausible but uninspiring streams of songs. The future’s elevator music could be created on the fly and tailored based on time of day, weather, or even the facial expressions of people listening.

I see these “infinite stream” models also moving into other non-music audio categories. Personalization can make it easier for people to get the ambient sounds they want. AI will be trained to play burbling creeks, faint chanting, or any other compelling niche sounds that might ordinarily be hard to get in large and diverse quantities. Similarly, many people use music to get pumped up, cool off, or become reflective. This new wave of AI tech will make it much easier to program your life’s ambient background and help you set your mood.

Prediction #3: human musicians are here to stay

Corollaries:

Music is like fashion. Music exists in a cultural context and moment in time and is paired with an engaged fan base. Most musicians monetize through in-person concerts, which often feel like communal religious experiences. As fun as the movie Yesterday was (the protagonist wakes up in a world where The Beatles never happened and becomes an instant success playing Beatles hits), it’s hard to believe all the hits would culturally resonate today the same way they did in their time.

Hatsune Miku is perhaps the most interesting exception that proves the rule: people like people. She is a computer-generated virtual singer and has garnered hundreds of millions of YouTube views for her music.

Hatsune Miku is not alone in being a fictional front person; many others exist along a spectrum of fictional to real artists. But what they help illustrate is how clearly people appeal to fans: even a fake avatar is more appealing than no musician. And a computer-rendered musician is itself a fashion statement. I’m sure there’ll continue to be some successful virtual musicians. They are easy to work with (just program them to do what you want without any debate), and they don’t come with travel costs or timeline constraints for tours. But computer-generated musicians are only one type of fashion. Ultimately I believe people will continue to connect most with real people.

There’s long been a split in the music world between the “singers” (performers or music brand) and the “songwriters” who make the melody, lyrics, and composition. Hatsune Miku is one extreme example of a completely disjoint “singer” front-person brand who has no role in the songwriting. AI certainly creates the opportunity to split singers and songwriters further, but I believe the opposite effect will be even more powerful. AI-driven tools will remove barriers to being both the singer (brand) and songwriter (or AI-assisted DJ), enabling more people to become beloved musicians.

Challenges ahead

Across all types of media, these new types of AI-generated content raise societal and legal questions. How can we make sure these technologies are used to empower storytelling and speech rather than to cause harm? What happens if I can interpolate towards your work but not credit you, and you can’t prove your contribution? What responsibilities do distribution platforms like YouTube and Spotify have, and what are artist responsibilities? These are difficult questions we’re going to be grappling with for decades to come.

Thanks

Thanks for reading! I’d love your feedback, thoughts, or counter-arguments :-). For their invaluable feedback on ideas and drafts, I want to thank everyone who reviewed them, and my family.
